Article by Amit Caesar

Mark Zuckerberg announced last week that his company would change its name from Facebook to Meta, with a new emphasis on creating the metaverse.

Creating a sense of presence in the virtual world will be a defining feature of this metaverse. Presence could simply mean interacting with other avatars and feeling immersed in a foreign environment. It could also entail giving users some sort of haptic feedback when they touch or interact with objects in the virtual world. (The way your controller vibrated when you hit a ball in Wii tennis was a primitive form of this.)

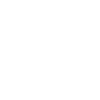

As part of this, Meta AI, a division of Meta, wants to use a robot finger sensor called DIGIT and a robot skin called ReSkin to help machines learn how to touch and feel like humans.

In a Facebook post today, Meta CEO Mark Zuckerberg said, "We designed a high-res touch sensor and worked with Carnegie Mellon to create a thin robot skin. We're getting closer to having realistic virtual objects and physical interactions in the metaverse now."

Robot touch, according to Meta AI, is an intriguing research domain that can help artificial intelligence improve by receiving feedback from the environment. Meta AI hopes to advance the field of robotics by working on this touch-based research, as well as use this technology to incorporate a sense of touch into the metaverse in the future.

“People in AI research are trying to get the full loop of perception, reasoning, planning, and action, as well as getting feedback from the environment,” says Yann LeCun, chief AI scientist at Meta. Further, LeCun believes that knowing how actual objects feel will be a critical context for AI assistants (such as Siri or Alexa) if they are to one day assist humans in navigating an augmented or virtual world.

Touch with DIGIT

Machines' current perception of the world is not very intuitive, and there is room for improvement. For starters, machines have typically been trained only on vision and audio data, leaving them unable to take in other sensory data such as touch, taste, or smell.

That is not how humans work. "We use touch extensively from a human perspective," says Meta AI research scientist Roberto Calandra, who works with DIGIT. "Touch has always been thought to be a sense that would be extremely useful to have in robotics. However, because of technical limitations, widespread use of touch has been difficult."

The goal of DIGIT, according to the research team in a blog post, is to develop a small, inexpensive robot fingertip that can withstand wear and tear from repeated contact with surfaces. It must also be sensitive enough to detect properties such as surface characteristics and contact forces.

Humans can recognize an object by touch alone by gauging its general shape. DIGIT uses a vision-based tactile sensor to replicate this.

DIGIT comprises a gel-like silicone pad that sits on top of a plastic square and is shaped like the tip of your thumb. Sensors, a camera, and PCB lights line the silicone inside that plastic container. Whenever the silicone gel, which resembles a disembodied robot finger, touches an object, it creates shadows or shifts in color in the image captured by the camera. In other words, the touch it detects is expressed visually.

Calandra explains, "What you really see is the geometrical deformation and geometrical shape of the object you're touching." "You can also infer the forces applying to the sensor from this geometrical deformation."
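The idea of reading touch from a camera image can be illustrated with a toy example. The sketch below is not the DIGIT firmware or any real library's API. It assumes, purely for illustration, that a no-contact reference image is available and that contact shows up as a brightness change above a hand-picked threshold. The function names `contact_mask` and `contact_summary`, the threshold value, and the synthetic images are all hypothetical.

```python
# Hypothetical sketch: not DIGIT's actual processing pipeline.
# Images are plain nested lists of grayscale values (0-255).

def contact_mask(reference, frame, threshold=20):
    """Mark pixels whose brightness changed by more than `threshold`."""
    return [
        [abs(f - r) > threshold for r, f in zip(ref_row, frame_row)]
        for ref_row, frame_row in zip(reference, frame)
    ]


def contact_summary(mask):
    """Return (contact_area_in_pixels, centroid) for a boolean mask.

    The centroid is (row, col), or None when nothing is touching.
    """
    touched = [
        (r, c)
        for r, row in enumerate(mask)
        for c, hit in enumerate(row)
        if hit
    ]
    if not touched:
        return 0, None
    area = len(touched)
    centroid = (
        sum(r for r, _ in touched) / area,
        sum(c for _, c in touched) / area,
    )
    return area, centroid


# Synthetic 5x5 example: a flat gel image, then a 2x2 dark imprint.
reference = [[100] * 5 for _ in range(5)]
frame = [row[:] for row in reference]
for r in (1, 2):
    for c in (2, 3):
        frame[r][c] = 60  # assumed shadow cast by the pressed-in object

area, centroid = contact_summary(contact_mask(reference, frame))
print(area, centroid)
```

A larger contact area or a stronger brightness change would hint at a firmer press, which is roughly the sense in which force could be inferred from deformation. A real sensor would need calibration and a far richer model than a single threshold.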

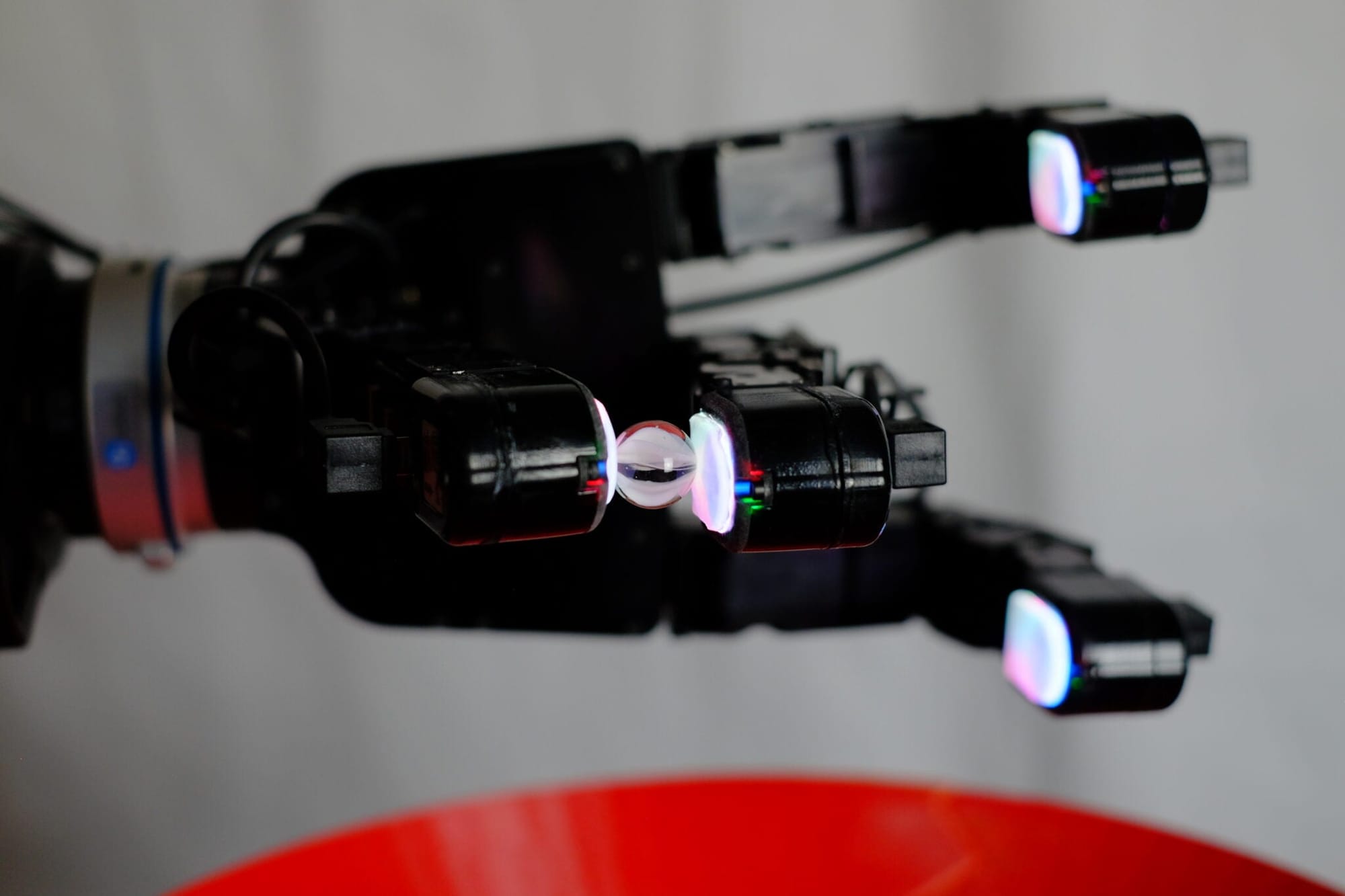

In a 2020 paper, the technology behind DIGIT was open-sourced, and a few examples of how different objects would look with the DIGIT touch were provided. DIGIT can record the imprint of any object it comes into contact with. It can feel the jagged, individual teeth on a small piece of velcro and the small contours on the face of a coin.

However, the team now wants to collect more data on touch, which they won't be able to do on their own.

As a result, Meta AI has teamed up with MIT spin-off GelSight to commercialize and sell these sensors to the research community. Calandra estimates the sensors will cost around $15, which is hundreds of times less than most commercially available touch sensors.

Besides the DIGIT sensor, the team is releasing PyTouch, a machine learning library for touch processing.

The touch sensor on DIGIT can reveal much more than simply looking at the object. According to Mike Lambeta, a hardware engineer working on DIGIT at Meta AI, it can provide information about the object's contours, textures, elasticity or hardness, and the amount of force being applied to it. An algorithm can combine this data and give a robot feedback on how to pick up, manipulate, move, and grasp various objects, from eggs to marbles.

Feel with ReSkin

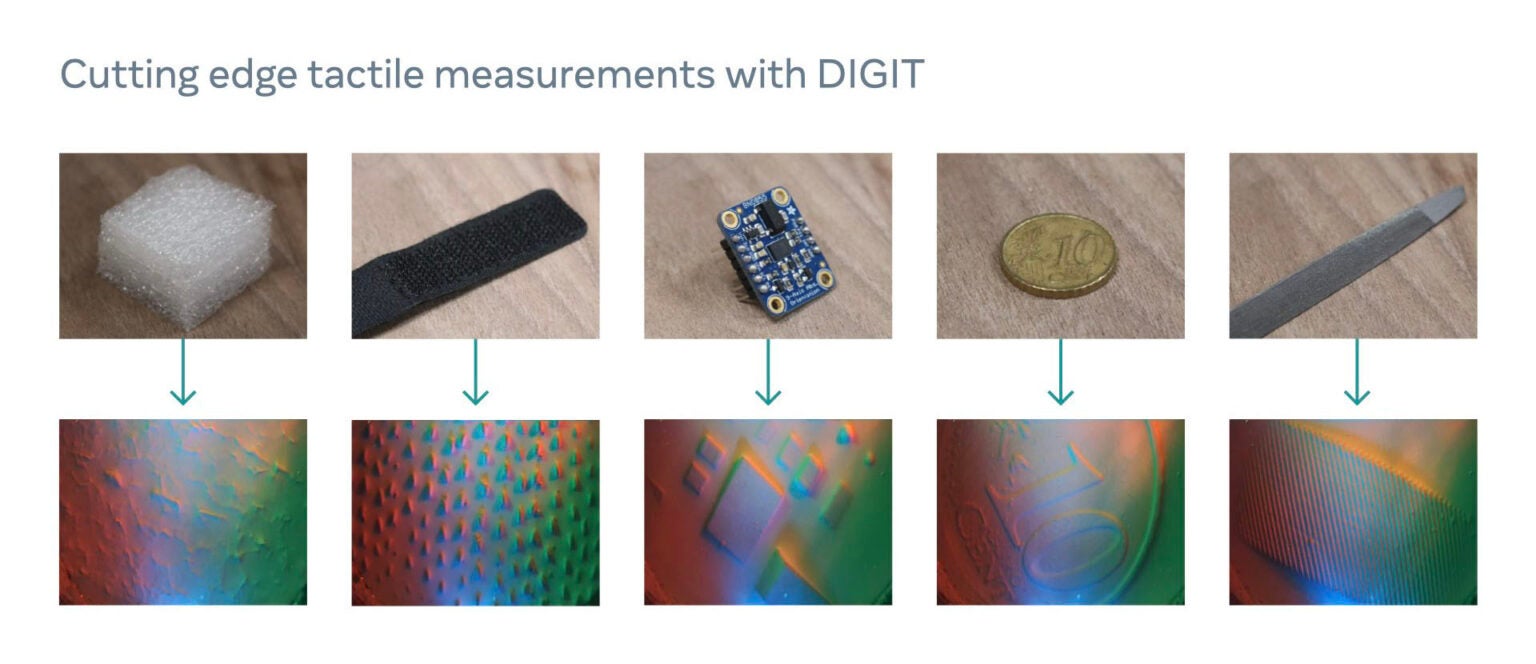

A robot “skin” that Meta AI created with Carnegie Mellon researchers in some ways complements the DIGIT finger.

ReSkin is an inexpensive and replaceable touch-sensing skin that uses a learning algorithm to help it be more universal, meaning that any robotics engineer could in theory easily integrate this skin onto their existing robots, Meta AI researchers wrote in a blog post.

It’s a 2-3 mm thick slab that can be peeled off and replaced when it wears down. It can be tacked onto different robot hands, tactile gloves, arm sleeves, or even on the bottom of dog booties, where it can collect touch data for AI models.

ReSkin has an elastomer surface, which is rubbery and bounces back when you press on it. Embedded in the elastomer are magnetic particles.

When you apply force to the “skin,” the elastomer deforms, and that deformation changes the magnetic flux, which is read out by magnetometers under the skin. The force is registered in three directions, mapped along the x, y, and z axes.

The algorithm then converts these changes in magnetic flux into force. It also accounts for the properties of the skin itself (how soft it is, how much it bounces back) when estimating that force. Researchers have taught the algorithm to subtract out the ambient magnetic environment, such as the magnetic signature of an individual robot or the magnetic field at a particular location on the globe.
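The flux-to-force step can be sketched in a few lines. This is only a simplified stand-in for ReSkin's learned model: it assumes a single three-axis magnetometer, treats each axis independently, and fits a plain linear gain by least squares. The function names, the calibration readings, and the units are all made up for illustration.

```python
# Hypothetical sketch: a linear stand-in for ReSkin's learned model.
# One 3-axis magnetometer is assumed; all values are in arbitrary units.

def mean_vector(samples):
    """Average a list of (x, y, z) readings component-wise."""
    n = len(samples)
    return tuple(sum(s[i] for s in samples) / n for i in range(3))


def subtract_baseline(reading, baseline):
    """Remove the ambient field so only touch-induced change remains."""
    return tuple(v - b for v, b in zip(reading, baseline))


def fit_gains(flux_changes, forces):
    """Least-squares gain per axis for the model force = gain * flux_change."""
    gains = []
    for i in range(3):
        num = sum(d[i] * f[i] for d, f in zip(flux_changes, forces))
        den = sum(d[i] ** 2 for d in flux_changes)
        gains.append(num / den if den else 0.0)
    return tuple(gains)


def estimate_force(reading, baseline, gains):
    """Convert a raw reading into an (x, y, z) force estimate."""
    delta = subtract_baseline(reading, baseline)
    return tuple(g * d for g, d in zip(gains, delta))


# Step 1: record the ambient field while nothing touches the skin.
rest_readings = [(10.0, -5.0, 40.0), (10.0, -5.0, 40.0)]
baseline = mean_vector(rest_readings)

# Step 2: press with known forces and fit one gain per axis.
pressed_readings = [(12.0, -5.0, 44.0), (10.0, -3.0, 48.0)]
known_forces = [(1.0, 0.0, 2.0), (0.0, 1.0, 4.0)]
deltas = [subtract_baseline(r, baseline) for r in pressed_readings]
gains = fit_gains(deltas, known_forces)

# Step 3: estimate the force behind a new reading.
force = estimate_force((11.0, -5.0, 46.0), baseline, gains)
print(force)
```

Measuring the baseline on the robot itself is what lets the same skin move between machines and locations in this toy version. The softness and rebound of the elastomer would be absorbed into the fitted gains, which is one reason a replaced skin would need fresh calibration or a model that generalizes across skins.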

“What we have become good at in computer vision and machine learning now is understanding pixels and appearances. Common sense is beyond pixels and appearances,” says Abhinav Gupta, Meta AI research manager on ReSkin. “Understanding the physicality of objects is how you understand the setting.”

LeCun teased that it’s possible that the skin could be useful for haptics in the virtual environment, so that when you touch an object, you might get some feedback that tells you what this object is. “If you have one of those pieces of artificial skin,” he says, “then, combined with actuators, you can reconstruct the sensation on the person.”

Certainly, both DIGIT and ReSkin are only preliminary models of what touch sensors could be.

“Biologically, human fingers allow us to really capture a lot of sensor modalities, not only geometry deformation but also temperature and vibrations,” says Calandra. “The sensors in DIGIT only give us, in this moment, the geometry. But ultimately, we believe we will need to incorporate these other modalities.”

The gel tip mounted on a box, Calandra acknowledges, is not a perfect form factor. There’s more research needed on how to create a robot finger that is as dextrous as a human finger. “Compared to a human finger, this is limited in the sense that my finger is curved, has sensing in all directions,” he says. “This only has sensing on one side.”

Hopes for a multi-sensory enabled “smart” metaverse

Meta has also been improving its AI's sensory library in order to support potentially useful metaverse tools. Meta AI announced a new project called Ego4D in October, which collected thousands of hours of first-person videos that could one day be used to teach artificial intelligence-powered virtual assistants how to help users remember and recall events.

"One big question we're still working on is how to get machines to learn new tasks as quickly as humans and animals," LeCun says. Machines are clumsy, and they take a long time to learn. Reinforcement learning to teach a car to drive itself, for example, must be done in a virtual environment because it would have to drive for millions of hours, cause countless accidents, and destroy itself multiple times before it learned how to navigate. "And even then," he adds, "it probably wouldn't be that reliable."

Humans, on the other hand, can learn to drive in just a few days because we have a pretty good model of the world by the time we reach our teenage years. We understand gravity and the fundamental physics of automobiles.

"The crux of the problem here is to get machines to learn that model of the world and predict different events and plan what's going to happen because of their actions," LeCun says.

Similarly, for the AI assistant that will one day interact with humans naturally in a virtual environment, this is an area that needs to be fleshed out.

"Virtual agents that can live in your augmented reality glasses, your smartphone, or your laptop, for example, is one of the long-term visions of augmented reality," LeCun says. "It assists you in your daily life in the same way that a human assistant would, and that system will need to have some understanding of how the world works, some common sense, and be smart enough not to be annoying to converse with. In the long run, that is where all of this research will lead."

